How not to increase the customer experience scores

It’s “good news, bad news” time for measuring customer experience. The good news is that some people have found really quick and easy ways to increase customer scores. The bad news is that those creative solutions can be catastrophic for the business and ultimately the people themselves.

We’ll look at the reasons why it happens and the consequences in a moment. Firstly though, I suspect we’re all agreed that for any organisation to improve it needs to measure the things that matter, not what is convenient. It will use a combination of quantitative and qualitative feedback from customers and employees to influence the right change and investment decisions.

However, the pressure for better and better metrics can easily lead to gaming of the customer experience scores and measurement system. The following examples are ones I’ve genuinely come across in recent times. I share them with you to illustrate what can happen and to hopefully prompt a sense-check that it’s not happening in your business.

- Misleading respondents: Net Promoter Score and others like it have their place. Each method has its own critical nuances that require a severe ‘handle with care’ advisory. So what certainly doesn’t help is where those carrying out the surveys have been told to, or are allowed to, manipulate the scoring system. In other words, when asking for an NPS (recommendation) number they tell the customer that “A score of 0-6 means the service was appalling, 7 or 8 is bad to mediocre and 9 or 10 is good”. And hey presto, higher NPS.

- Cajoling: I’ve also listened in to research agencies saying to customers “Are you sure it’s only an eight, do you mean a nine? There’s hardly any difference anyway”. There may be little difference to the customer, but it’s very significant in the final calculation of the score, because a nine counts as a promoter while an eight is a passive that adds nothing. Or, in response to a customer who is trying to make up their mind, “You said it was good so would that be ten maybe, or how about settle for nine?”. More good scores on their way.

- Incentivising customers: the Board of a franchised operation couldn’t understand why its customer scores were fantastic but its revenue was falling off a cliff. It turned out that if a customer wanted to give anything other than a top score in the survey they were offered a 20% discount next time they came in-store in return for upgrading their score to a 9 or 10. Not only that, but the customers got wise to it and demanded discounts (in return for a top score) every other time in future too as they “know how the system works”.

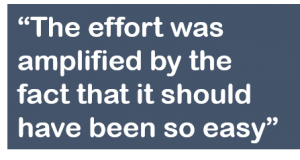

- Responses not anonymised: too often, the quest for customer feedback gets hijacked by an opportunity to collect customer details and data. I’ve seen branch managers stand over customers while they fill in response forms. Receipts from a cafe or restaurant invite you to leave feedback using a unique reference number that customers understandably think could link their response to the card details and therefore them. Employee surveys purport to be anonymous but then ask for sex, age, length of service and role, all things that make it easy to pinpoint a respondent, especially in a small team. So it’s not surprising that unless there has been a cataclysmic failure, responses will be unconfrontational, generically pleasant and of absolutely no use at all.

- Slamming the loop shut: Not just closing it. It’s the extension of responses not being anonymous. Where respondents are happy to share their details and to be contacted, following up good or bad feedback is a brilliant way to engage customers and employees. But I’ve also seen complaints from customers saying the branch manager or contact centre manager called them and gave them a hard time. Berating a customer for leaving honest feedback is a brilliant way to hand them over to a competitor.

- Comparing apples with potatoes: It’s understandable why companies want to benchmark themselves against their peer group of competitors or the best companies in other markets. It’s easy to look at one number and say whether it’s higher or lower than another. But making comparisons with other companies’ customer scores without knowing how those results are arrived at will be misleading at best and at worst make a company complacent. There are useful benchmarking indices such as those from Bruce Temkin whose surveys have the volume and breadth to minimise discrepancies. But comparing one company’s NPS or satisfaction scores with another’s, without knowing at what point in the customer journey or how the customers were surveyed, can lead to some very unreliable conclusions.

- Selective myopia: Talking of benchmarking, one famous sector leader (by market share) makes a huge fanfare internally of having the highest customer satisfaction scores of its competitors. Yet it conveniently ignores one other equally famous competitor who has significantly higher customer scores. The reason is a flawed technicality in that they have identical products, which customers can easily switch to and from but one operates without high street stores (yet it makes other branded stores available to use on its behalf). First among unequals.

- Unintended consequences: a leadership team told me that despite all the complaints about the service, its staff didn’t need any focus because they were highly engaged. The survey said so. However, talking to the same employees out on the floor, they said it was an awful place to work. They knew what was going wrong and causing the complaints but no-one listened to their ideas. They didn’t know who to turn to so they could help a customer and their own products and services were difficult to explain. Why then, did they have such high engagement scores? Because the employees thought (wrongly, as it happens) that a high index was needed if they stood any chance of getting a bonus so they ticked that box whenever the survey came round. The reality was a complete lack of interest or pride in their job (some said they would rather tell friends they were unemployed) and no prizes for guessing what that meant for customers’ experiences.
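To see why nudging an eight up to a nine matters so much, it helps to look at the arithmetic. NPS is the percentage of promoters (scores of 9 or 10) minus the percentage of detractors (scores of 0 to 6); sevens and eights are passives and count for nothing. The short Python sketch below uses ten made-up responses, purely for illustration, and the function name is my own rather than anything from a standard library.

```python
def net_promoter_score(scores):
    """Return NPS: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("at least one response is needed")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses, invented for this example only.
honest = [10, 9, 8, 8, 8, 7, 7, 6, 5, 3]

# The same customers after every "eight" has been cajoled into a "nine".
nudged = [9 if s == 8 else s for s in honest]

print(net_promoter_score(honest))  # 2 promoters, 3 detractors -> -10.0
print(net_promoter_score(nudged))  # 5 promoters, 3 detractors -> 20.0
```

Nothing about the customers’ actual experience has changed, yet the headline number has moved by 30 points.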

A downward spiral – the consequences of gaming customer scores

Of course, metrics are necessary but they are only really insightful when understood in the context of the qualitative responses. The consequences of getting that balance wrong are easy to understand but the reasons why are more complex. That doesn’t mean they shouldn’t be addressed.

The damaging impact of the complacency comes from believing things are better than they are. If a number is higher than it was last time, that’s all that matters, surely. Wrong. The business risk is that investments and resources will continue to be directed to the things that further down the line will become a low priority or simply a wasted cost in doing the wrong things really well.

What’s just as damaging is the impact the gaming has on people. The examples I’ve mentioned here are from some of the largest organisations in their respective markets, not small companies simply over-enthusiastically trying to do their best. Scale may be part of the problem, where ruling by metrics is the easiest way to manage a business. That is one of the biggest causes of customer scores being over-inflated: the pressure managers put on their team to be rewarded by relentlessly making things better as measured by a headline customer number, however flawed that is.

It’s a cultural thing. Where gaming of the numbers does happen, those who do it or ask for it to happen may feel they have little choice. If people know there are smoke and mirrors at work to manipulate the numbers or if they are being asked to not bother about what they know is important, what kind of a place must that be to work in? The good talent won’t hang around for long.

For me, beyond being timely and accurate there are three criteria that every customer measurement framework must adhere to.

- Relevant: they must measure what’s most important to customers and the strategic aims of the business

- Complete: the measures must give a realistic representation of the whole customer journey, not just specific points weeks after they happened

- Influential: CX professionals must be able to use the qualitative and quantitative insights to bring about the right change.

As ever, my mantra on this is to get the experience right first, then the numbers will follow. I’d urge you to reflect on your own measurement system and be comfortable that the scores you get are accurate and reliable.

It’s also worth asking why very good and capable people would feel they had to tell a story that sounds better than it is. Leaders and managers, your thoughts please…

Thank you for reading the blog, I hope you found it interesting and thought-provoking. I’d love to hear what you think so please feel free to add your comments below.

I’m Jerry Angrave, an ex-corporate customer experience practitioner and since 2012 I’ve been a consultant helping others understand how best to improve their customer experiences. If you’ve any questions about customer measurement or any other CX issue do please get in touch for a chat. I’m on +44 (0) 7917 718 072 or on email I’m [email protected].

Jerry Angrave

CCXP and a judge at the UK Customer Experience Awards